HOSS: Hall-D Online Skim System

Introduction

The HOSS system integrates two tasks: the last leg of raw-data transport in the Hall-D counting house, and the generation of skim files on the gluon farm computers. In brief:

- The DAQ (CODA) system writes raw data to a large RAM disk on a buffer server

- HOSS distributes the raw data from the buffer to one of several RAID servers

- HOSS sends a copy of the raw data to one of several farm nodes to produce skim files

HOSS is now a critical component in storing the raw data: if it fails, the RAM disk will fill and the DAQ system will stop taking data. However, mechanisms are built in so that raw-data transport can still proceed if skimming fails (see Details below for more).
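Because a stalled HOSS first shows up as a filling RAM disk, it can help to check the buffer's fill level directly. The following is a minimal sketch, not part of HOSS: ramdisk_usage and its 80% default threshold are hypothetical, and since the RAM disk's mount point is not given here, the caller must pass it in.

```shell
#!/bin/sh
# Minimal sketch (not part of HOSS): report how full the DAQ RAM-disk
# buffer is. ramdisk_usage is a hypothetical helper; the buffer's mount
# point is not documented here, so the caller passes it in.
ramdisk_usage() {
    if [ $# -lt 1 ]; then
        echo "usage: ramdisk_usage <buffer-dir> [warn-percent]" >&2
        return 2
    fi
    dir=$1
    warn=${2:-80}   # hypothetical default warning threshold, in percent

    # df -P prints a header plus one POSIX-format line for the filesystem;
    # field 5 of the data line is the "Use%" column, e.g. "42%".
    pct=$(df -P "$dir" 2>/dev/null | awk 'NR == 2 { sub(/%/, "", $5); print $5 }')
    case $pct in
        ''|*[!0-9]*)
            echo "could not read usage for $dir" >&2
            return 2 ;;
    esac

    echo "$dir: ${pct}% used"
    if [ "$pct" -ge "$warn" ]; then
        echo "WARNING: buffer is at least ${warn}% full; check that HOSS is draining it" >&2
        return 1
    fi
    return 0
}
```

For example, ramdisk_usage /path/to/ramdisk 90 (a placeholder path) prints the current usage and returns 1 once the buffer is at least 90% full.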

The system is driven by a configuration file that can be as simple or as complex as needed. The simplest configuration just distributes the raw data files over several RAID partitions.

Quick Reference

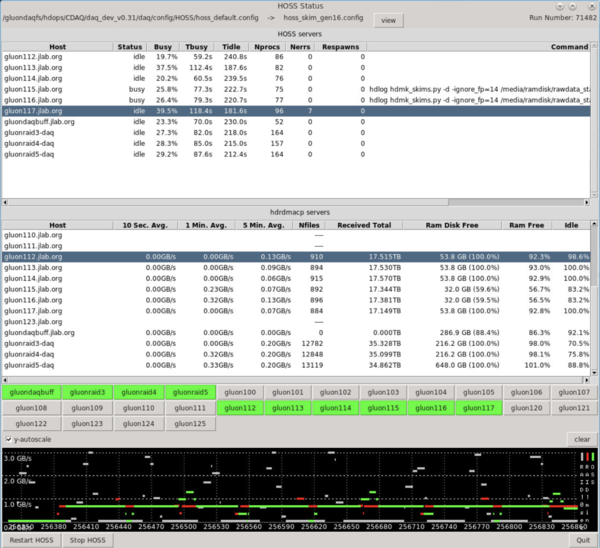

Users may monitor and interact with the HOSS system using a GUI program, shown in the screenshot above. To start it, log into the hdops account on the gluons and run:

- ssh gluon47

- start_hoss_mongui

Features to be aware of:

- Only a subset of the buttons in the lower half of the screen are colored; these are the nodes in the current configuration.

- The HOSS process on a single host can be restarted by right-clicking on the button and selecting restart from the pop-up menu. Do this if the button is red for more than a few seconds.

- The "Restart HOSS" button at the bottom of the screen kills all HOSS processes and restarts them. This is rarely needed, since all processes are restarted automatically when a new run starts. If an individual process needs to be restarted, restart just that one instead.

Website:

A HOSS DB website, viewable from both onsite and offsite, shows the trigger statistics and event ranges for every file HOSS processes. Through it one can also browse details of every file transferred through the HOSS system, including the skim files it creates.

Restarting from the command line

Here is how to restart HOSS. This reads the current configuration, stops any worker processes, and then starts them all up again. Note that this is run automatically from the run_download script that CODA runs during download.

start_hoss -r

HOSS Configuration

Here is how to use some pre-made HOSS configurations for common scenarios. For each of these you need to make the symbolic link $DAQ_HOME/config/HOSS/hoss_default.config point to a specific file in the $DAQ_HOME/config/HOSS/ directory. Before doing so, make sure all of the HOSS programs under the current configuration are stopped. Start with these commands, then choose from the examples below to set up a new configuration:

start_hoss -e   # stops existing HOSS processes
cd $DAQ_HOME/config/HOSS
rm hoss_default.config

DISABLE HOSS

This empty configuration effectively disables the HOSS system completely.

ln -s hoss_disable.config hoss_default.config

TRANSPORT RAW DATA + SKIMS (default)

This is the default configuration that will transport the raw data, scan the files for L1 trigger info to enter into the DB, and produce the full set of skim files.

ln -s hoss_skim_gen16.config hoss_default.config

TRANSPORT RAW DATA + DB ENTRY

This configuration will transport the raw data and scan the files for L1 trigger info to enter into the DB. It will skip the production of skim files. Note that the raw data is still transported to the gluon farm nodes for the trigger scan, but this is much faster and uses much less CPU than producing the full skims.

ln -s hoss_RAID+DB_gen16.config hoss_default.config

TRANSPORT RAW DATA ONLY

This configuration will only transport raw data to the RAID servers. No processes are run by HOSS on the gluon farm nodes. Note that if you choose this, then you may want to modify the RootSpy configuration to use the farm nodes normally dedicated to HOSS.

ln -s hoss_RAIDonly.config hoss_default.config
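The steps above (stop HOSS, remove the old link, create the new one) can be combined into one shell function so the link is never changed while workers from the old configuration are still running. This is a sketch under stated assumptions, not a HOSS tool: switch_hoss_config is a hypothetical name, and it assumes start_hoss is on the PATH and exits non-zero when it fails.

```shell
#!/bin/sh
# Sketch of the configuration switch described above. switch_hoss_config is
# a hypothetical helper, not part of HOSS. ASSUMPTIONS: start_hoss is on the
# PATH and exits non-zero if it fails to stop the workers.
switch_hoss_config() {
    if [ $# -lt 1 ]; then
        echo "usage: switch_hoss_config <config-file-name> [config-dir]" >&2
        return 2
    fi
    new_cfg=$1                              # bare file name, e.g. hoss_RAIDonly.config
    cfg_dir=${2:-$DAQ_HOME/config/HOSS}
    link=$cfg_dir/hoss_default.config

    # Refuse to point the link at a configuration that does not exist.
    if [ ! -f "$cfg_dir/$new_cfg" ]; then
        echo "no such configuration: $cfg_dir/$new_cfg" >&2
        return 1
    fi

    # Stop the workers while the OLD link is still in place, so that
    # "start_hoss -e" kills processes on the hosts of the old configuration.
    if ! start_hoss -e; then
        echo "start_hoss -e failed; configuration left unchanged" >&2
        return 1
    fi

    # Replace the link. The target is relative, so it resolves inside cfg_dir.
    rm -f "$link"
    ln -s "$new_cfg" "$link"
    echo "hoss_default.config -> $(readlink "$link")"
}
```

For example, switch_hoss_config hoss_RAIDonly.config selects the raw-data-only configuration. The new configuration takes effect the next time start_hoss runs, either at the next run start or by hand with start_hoss -r.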

Details

Operations

The HOSS system is made up of two types of processes: the hdrdmacp server, which receives files via RDMA, and the start_hoss_worker process, which does everything else.

The hdrdmacp servers are run as a service on all gluon computers that have an IB interface. This server is automatically managed by the OS and generally will never need to be restarted.

The start_hoss script is run by the $DAQ_HOME/scripts/run_download script, which CODA runs automatically. This master script reads the current HOSS configuration file ($DAQ_HOME/config/HOSS/hoss_default.config) and runs a start_hoss_worker instance on every host in the configuration. The worker processes run indefinitely, until explicitly killed by running "start_hoss -e". The only place "start_hoss -e" is run automatically is $DAQ_HOME/scripts/run_download, just before start_hoss is run again without the "-e" option; this clears out old processes and ensures a fresh start. Note that any files HOSS is processing at that moment will be abandoned.

Default Configuration

The default HOSS configuration file is $DAQ_HOME/config/HOSS/hoss_default.config. This should generally be a symbolic link pointing to another file in the same directory. This allows the default configuration to be easily changed via the filesystem.

IMPORTANT: If the symlink is modified while HOSS is running, then "start_hoss -e" may not kill all existing processes! To kill existing processes, "start_hoss -e" first reads the configuration file to determine which hosts to send pkill commands to. If the new configuration uses a different set of hosts than the previous one, workers on hosts that appear only in the old configuration will not be killed. Therefore, run "start_hoss -e" first and only then modify the default configuration symlink.
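After a configuration change it may be worth confirming that no workers survived on hosts from either the old or the new configuration. A minimal sketch follows; find_stray_hoss_workers and the HOSS_REMOTE override are hypothetical, the host list must be supplied by hand (extracting it from a HOSS configuration file is not covered), and it assumes worker processes are visible to pgrep -f under the name start_hoss_worker or hd_data_flow.py.

```shell
#!/bin/sh
# Sketch (not part of HOSS): after "start_hoss -e", report any HOSS workers
# still running on the given hosts. find_stray_hoss_workers and the
# HOSS_REMOTE override are hypothetical. ASSUMPTION: workers appear in
# "pgrep -f" output as start_hoss_worker or hd_data_flow.py.
find_stray_hoss_workers() {
    runner=${HOSS_REMOTE:-ssh}   # command used to run the check on each host
    status=0
    for host in "$@"; do
        # The [s]/[h] brackets keep the pattern from matching the remote
        # shell that runs pgrep, whose own command line contains the pattern.
        "$runner" "$host" "pgrep -f '[s]tart_hoss_worker|[h]d_data_flow[.]py'" >/dev/null 2>&1
        rc=$?
        case $rc in
            0) echo "$host: HOSS worker still running"; status=1 ;;
            1) ;;   # pgrep exit 1: no matching process
            *) echo "$host: check failed (exit $rc)" >&2; status=1 ;;
        esac
    done
    return $status
}
```

For example, find_stray_hoss_workers gluonA gluonB (placeholder host names) prints one line per host that still has a worker and returns non-zero if any worker remains or a host could not be checked.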

Python Environment

The start_hoss_worker script is actually just a wrapper for the python script hd_data_flow.py which does all of the work. The job of the wrapper is to source a central python virtual environment to use for running hd_data_flow.py. The location of the virtual environment used is taken from the HOSS_VENV environment variable which is set in the /gluex/etc/hdonline.cshrc file. This currently points to /gapps/python/VENV/hoss_20191007/venv.
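The wrapper's job can be pictured with the sketch below. It is not the actual start_hoss_worker script: it assumes a POSIX shell and the standard Python venv layout ($HOSS_VENV/bin/activate), and HD_DATA_FLOW is a hypothetical override for the location of hd_data_flow.py. Because HOSS_VENV is set in a csh file, the real wrapper may well be a csh script sourcing bin/activate.csh instead.

```shell
#!/bin/sh
# Sketch of a start_hoss_worker-style wrapper; NOT the actual HOSS script.
# ASSUMPTIONS: a POSIX shell and a standard Python venv layout.
# HD_DATA_FLOW is a hypothetical override for the path to hd_data_flow.py.
hoss_worker_wrapper() {
    if [ -z "${HOSS_VENV:-}" ]; then
        echo "HOSS_VENV is not set (normally set in /gluex/etc/hdonline.cshrc)" >&2
        return 1
    fi
    if [ ! -f "$HOSS_VENV/bin/activate" ]; then
        echo "no virtual environment found at $HOSS_VENV" >&2
        return 1
    fi

    # Activating the venv puts $HOSS_VENV/bin first on PATH, so "python"
    # below resolves to the venv's interpreter and its packages.
    . "$HOSS_VENV/bin/activate"

    # Replace this shell with the worker, passing all arguments through.
    exec python "${HD_DATA_FLOW:-hd_data_flow.py}" "$@"
}
```

A standalone wrapper script would end with the line hoss_worker_wrapper "$@". Using exec means the Python process replaces the shell, so no idle shell process is left behind.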

The purpose of using a central virtual environment is twofold:

1. It allows instantaneous distribution of Python packages, as opposed to installing them at the system level on dozens of gluon computers.

2. It allows custom environments with the packages/versions needed for specific scripts.

Source Code

The source code for the HOSS system is maintained in the subversion repository here:

https://halldsvn.jlab.org/repos/trunk/online/packages/HDdataflow

On the gluons, it is in the directory:

/gluex/builds/devel/packages/HDdataflow

The configuration files are stored in:

~hdops/CDAQ/daq/config/HOSS